Elon Musk’s X removes general option to report misleading info about politics – TechCrunch

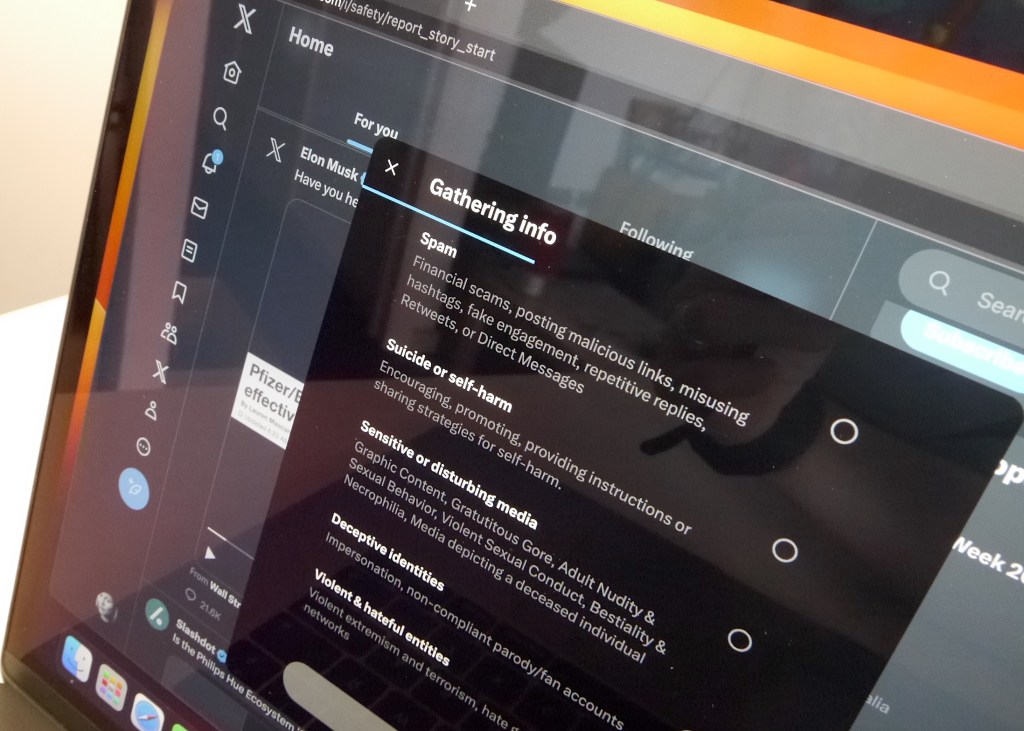

Image Credits: Natasha Lomas/TechCrunch

X (formerly Twitter) appears to have removed an option that allowed users in a handful of markets to directly report misleading information about politics.

An Australian digital research group called Reset.Australia spotted the change and posted an open letter (via the Guardian), addressed to X’s country manager, in which it writes:

A recent change to your reporting process appears to have left Australian users unable to report electoral misinformation. This is because the categories for reporting in Australia offer no option to report electoral misinformation. Users are offered inappropriate categories such as hate speech, abuse, spam, imitation etc. Previously Australian users could select ‘It’s misleading’ about ‘Politics’ category. This may leave violative content subject to an inappropriate review process and not labelled or removed in compliance with your policies.

The group warns X that the change could breach Australia’s misinformation code — which it notes requires signatories to enable users to “report content or behaviours to Signatories that violate their policies… through publicly available and accessible reporting tools”.

“X’s Civic Integrity Policy makes clear that electoral misinformation is against your policies (see appendix 2). Users should be able to report this content appropriately,” it adds. The letter also points out the timing of the change comes ahead of a major vote — dubbing it “extremely concerning that Australians would lose the ability to report serious misinformation weeks away from a major referendum”.

TechCrunch has confirmed in our own tests that an option on X to directly report election misinformation no longer appears for users with an IP address located in the US, Australia, Brazil or Spain — which were some of the earliest markets to get the ability to report political misinformation.

Instead users who click on the “report post” option in the drop-down menu attached to each post (i.e. tweet) are presented with options to make reports for the following reasons: Hate; abuse & harassment; violent speech; child safety; privacy; spam; suicide or self harm; sensitive or disturbing media; deceptive identities; violent & hateful entities.

The closest option to misleading information is to make a report for deceptive identities — but the option is focused on account impersonation, including of brands, so looks ill-suited to reporting other types of political misinformation.

The (now removed) misleading information reporting feature was added under Twitter’s former leadership to select markets (including the US) starting in August 2021 — when it was billed as a test — allowing users to report different types of misinformation, including politics/election related and health/COVID-19 related, or something else. The option was then subsequently rolled out in South Korea and the Philippines, tracking electoral activity.

Fast forward to last fall, when new owner Elon Musk took over the company and set about putting his stamp (or sink) on things, and Twitter (now X) quickly stopped enforcing the COVID-19 misleading info policy, noting the change without explanation in a one-line update on its official blog: “Effective November 23, 2022, Twitter is no longer enforcing the COVID-19 misleading information policy.”

X has made no such bald statement about ending enforcement on political misinformation. Indeed, last month an unnamed member of “X Safety” claimed in another official blog post that X is committed to combating threats to elections, and that it is currently expanding its safety and elections teams “to focus on combating manipulation, surfacing inauthentic accounts and closely monitoring the platform for emerging threats”.

The blog post also links directly to X’s “Civic Integrity” rules — which explicitly state:

You may not use X’s services for the purpose of manipulating or interfering in elections or other civic processes. This includes posting or sharing content that may suppress participation or mislead people about when, where, or how to participate in a civic process.

However the blog post flags a series of policy changes which, if you dig into the detail, confirm that under Musk the platform’s T&Cs are applying a particular definition of civic integrity that does not broadly prohibit misleading information about politics.

The “Civic Integrity” policy prohibitions, which are also only in place for a limited time before and after political elections, censuses and “major” referenda and ballot initiatives, are focused on a few specific areas — namely: Misleading info about how to participate in a vote; attempts to suppress participation, including via intimidation; and false or misleading affiliation.

There is explicitly no ban on general inaccurate statements about elected or appointed officials, candidates, or political parties — indeed, that kind of political misinformation is essentially treated as ‘freedom of expression’ by Musk. (The blog post stipulates the platform’s intent is to “strike the right balance between tackling the most harmful types of content — those that could intimidate or deceive people into surrendering their right to participate in a civic process — and not censoring political debate”.)

The post also points to an April update to X’s “enforcement philosophy” — where it set out a preference to restrict the reach of posts that violate its policies by making the content less discoverable, rather than taking the posts down. And to add labels where this process of so-called “visibility filtering” has been applied.

Given this approach it’s not surprising to see Musk remove the legacy option to report misleading information about politics, which might generate lots of reports X won’t act on.

However, if X’s actions are to reflect its stated “Civic Integrity” policies, you would have expected it to add a direct option for users to report election misinformation — and, under that, offer additional options where users can report attempts at voter suppression/intimidation etc — even if the ability to make these kinds of reports were only to appear close to the date of a relevant poll being held.

But Reset.Australia’s point is that hasn’t happened — with just over two weeks to go until the 2023 Indigenous Voice referendum (which takes place on October 14).

As noted above the option for X users to report account impersonation is present — and could serve for reporting candidate or political party impersonation — but there’s no direct way for users in Australia to report posts that seek to interfere with the election at present.

Meanwhile, the scale of disinformation on X also appears to be getting worse under Musk.

Yesterday the European Union reported on pilot research conducted by participants in its Code of Practice on tackling disinformation that found X’s platform to have the worst ratio of disinformation/misinformation posts among major platforms in three EU Member States.

A NewsGuard report also found that Musk’s decision to remove labels denoting state-run or government-affiliated media accounts led to a surge in Russian, Chinese and Iranian propaganda — aka, the sort of disinformation that’s often aimed at democratic elections.

One curve ball here is that Musk has actually expanded election misinformation reporting options — but only for users in the EU (a bloc of countries which includes Spain, where it has also removed the direct option to report misleading info about politics from the main “report post” menu).

So while X users in Spain (i.e. inside the EU) no longer see that direct political misinformation reporting option when they click on “report post” they do see an additional option at the top level of the menu — to “report EU illegal content” — where they are able to report election misinformation or other types of “negative effects” on civic discourse.

This recently added reporting option is intended to let EU users make reports under a new set of pan-EU content moderation and governance rules, called the Digital Services Act (DSA), which carries major penalties for infringements (of up to 6% of global annual turnover).

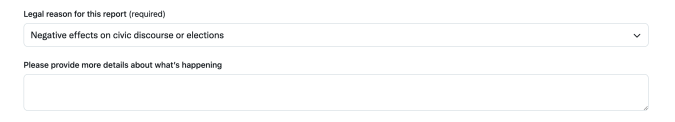

As such, there are a number of steps involved in making this type of report (and it’s a bit more long-winded than the direct reporting options) — including needing to provide some contact details; specifying which EU country’s laws the content being reported is breaching; and picking from a list of 14 options presented under the “legal reason” drop-down menu — which includes the aforementioned option to report “negative effects on civic discourse or elections” (which should cover reports concerning political misinformation).

The DSA form also offers a free text field where users can “provide more details about what’s happening”:

Screengrab: Natasha Lomas/TechCrunch

This “report EU illegal content” option means that even though X has removed a direct option to report misleading political info in the main “report post” menu it has technically — since the end of last month when the DSA came into force for larger platforms such as X — expanded the ability to report this type of content to all users in the EU (i.e. not just Spain).

It remains to be seen, though, how the platform will handle reports of illegal content it receives from EU users. (Notably, for example, X is facing several legal procedures in Germany where it’s been accused of failing to comply with German speech laws requiring it to take down illegal hate speech. So it may seek to limit how it acts on users’ reports of posts with “negative effects” on civic discourse or elections, but it will need to justify its response to EU regulators, with the risk of big fines if it strikes the wrong balance.)

X under Musk is clearly only providing the option for EU users to report political misinformation in response to the new pan-EU law — which regulates how platforms must respond to certain types of problematic content, including by providing users with the ability to make reports.

The DSA also requires larger platforms, including X, to assess and mitigate systemic risks — which includes disinformation. So it has a legal duty not to prevent or ignore user reports.

Countries and regions that don’t have comparable legal requirements to the EU’s DSA — such as the US — are, perhaps unsurprisingly (given Musk’s penchant for painting himself as a champion of free speech), no longer being provided with any direct option on X to report political misinformation.

That said, the lack of any obvious way for X users outside the EU to report election-related misinformation (at least) does look out of step with the policies Musk’s company claims it’s applying.

We emailed X’s press office regarding the removal of a direct option for users to report misleading information about politics, asking how the move squares with its wider claims to be investing in election integrity (repeated by CEO Linda Yaccarino in an interview with the Financial Times today) — but the company did not engage with our questions — just firing out its latest empty auto-reply which states: “Busy now, check back later.”
